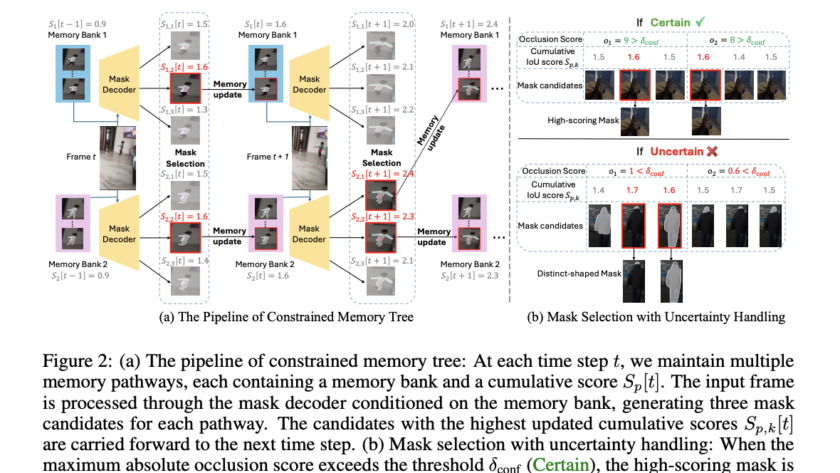

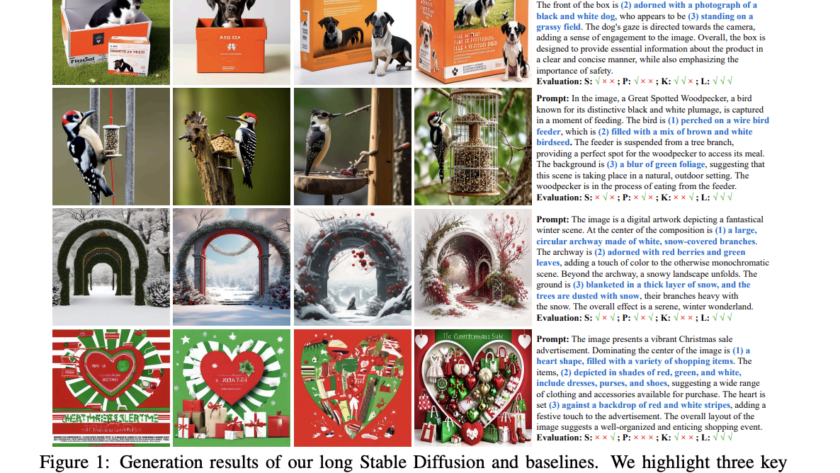

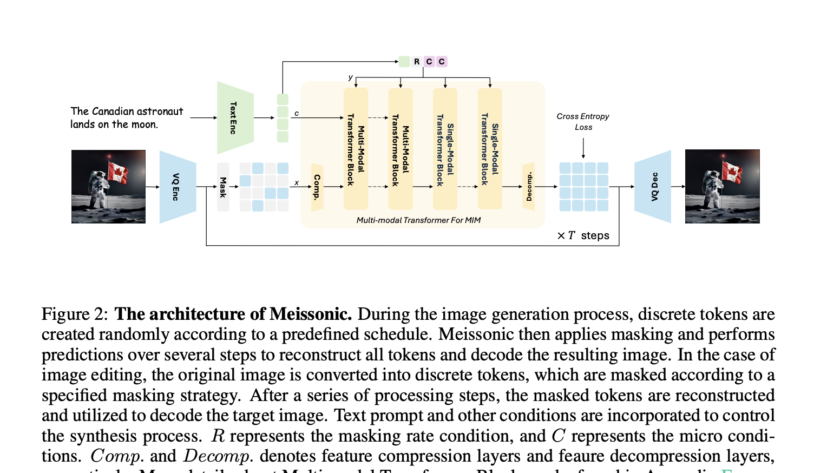

Diffusion models have pulled ahead of other approaches in text-to-image generation. With continuous research in this field over the past year, we can now generate high-resolution, realistic images that are often indistinguishable from authentic ones. However, as the quality of these hyperrealistic images increases, model parameter counts are also escalating, and this trend results in high training and…